In some of the earlier episodes of “the history of things”, we looked at curiosities ranging from Victorian self-defense devices to ultra-miniature vacuum tubes. But in today’s installment, I’d like to talk about an artifact of a much more recent vintage.

Many readers will recall that in the early 2010s, we were in the heyday of the internet-of-things (IoT) craze. It wasn’t just phones or cars that were getting online; tiny networked computers started cropping up in lightbulbs, thermostats, coffee makers, and vegetable juicers.

The man behind the curtain was ARM Holdings: a little-known company that designed a series of low-power 32-bit CPU cores and then started cheaply licensing them to anyone who wanted to make their own chips. Their early CPUs were by no means great, but they were good enough. All of a sudden, you could put Linux and wi-fi inside just about anything, and it only cost you a buck or five.

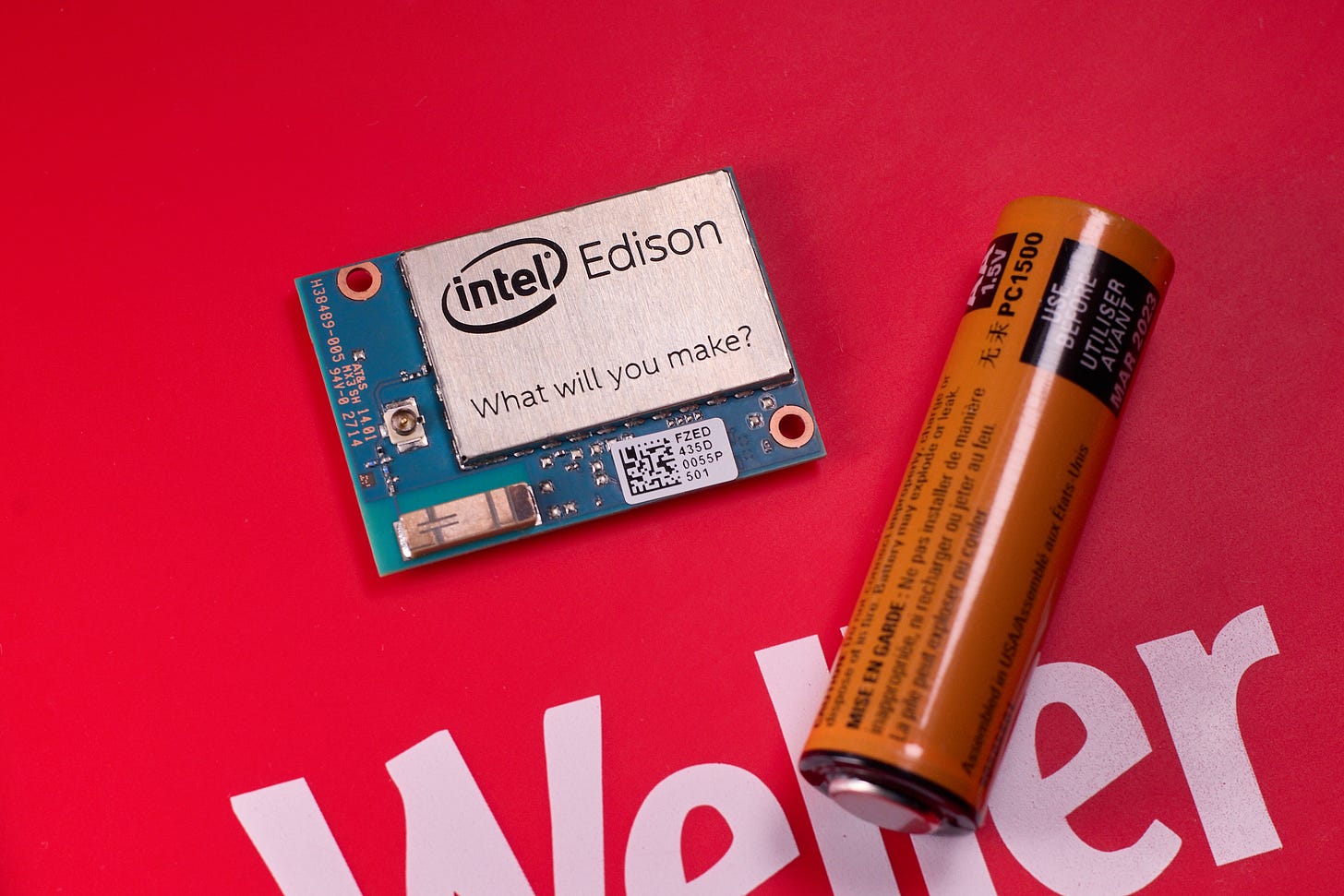

At the time, Intel, the dominant manufacturer of desktop and server processors, was still singularly focused on high-performance devices, including their failed bet on IA-64. But by 2013, they decided they wanted in on the IoT game and released a stripped-down, low-power x86 processor dubbed Intel Quark. The centerpiece of their Quark ecosystem was supposed to be Intel Edison, a wi-fi-enabled dual-core Linux system the size of a postage stamp, retailing for about $50:

The device ultimately shipped with a different microarchitecture (Silvermont), but it was still selling the same idea: that Intel was a worthwhile alternative to ARM in the embedded space. The platform was ostensibly marketed to hobbyists, and this made a lot of sense: many IoT products were coming out of small shops started by enthusiasts, and hobby single-board computers such as Raspberry Pi or BeagleBoard provided a natural on-ramp for ARM devices. But Intel was also pitching it as a “product-ready” module, an idea embodied by the inexplicable “Intel Smart Mug”, showcased during their 2014 CES keynote:

The “product-ready” pitch for Edison wasn’t compelling, especially since it coincided with Espressif releasing a series of wi-fi microcontrollers that retailed for less than one tenth the price. But for hobbyist uses, the module was nothing short of revolutionary: it was much smaller and more power-efficient than any other hobby single-board computer you could buy at the time.

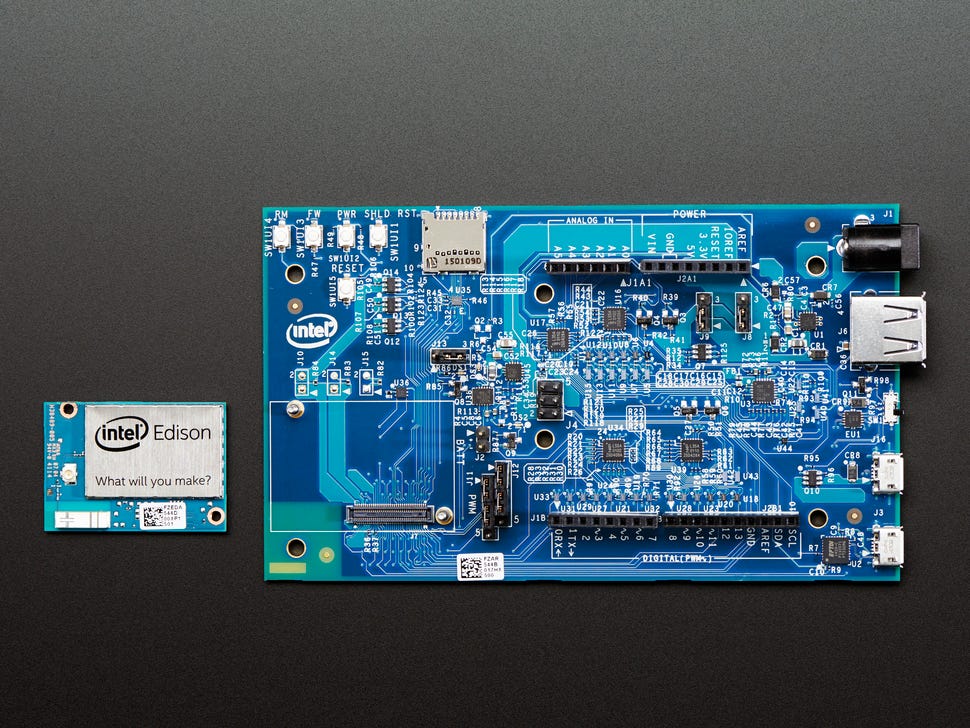

But then, Intel fumbled everything else. To use Edison in hobby applications, you needed a breakout board for the subminiature Hirose DF40 connector in the back. Passive breakout boards were tricky to use because the chip could only tolerate 1.8V on GPIO pins; and the primary board marketed by Intel was an $80 “faux Arduino” monstrosity that effectively obliterated all of Edison’s price and size advantages:

It was a death of a thousand cuts. The release-day documentation for Edison was lacking and hard to find on Intel’s own website; the factory-preloaded Linux distro was broken so badly that it didn’t even persist wi-fi settings across reboots. Instead of polishing the out-of-box experience, Intel ended up getting sidetracked by weird corporate partnerships. For example, they pursued support for Mathematica’s Wolfram Language, something that I imagine appealed to roughly one user: Stephen Wolfram himself.

Just three years later, Edison was no more. With it died the small dream of having an expressive assembly language on embedded CPUs.

I’m speculating that Intel, remembering the IA-64 fiasco, went even harder on all its organizational pathologies with Edison. “Our mistake with IA-64 was insufficient bureaucracy; let’s have more oversight with Edison”. It’s an age-and-size sclerosis that is very common and is extremely difficult to defeat.

Dig the article! Not that it's exactly related, but the dude over at The Chip Letter has a piece about how Intel's x86 moat became a prison: https://open.substack.com/pub/thechipletter/p/the-paradox-of-x86. It's apropos of very little, but it touches on some of Intel's missteps trying to compete outside the server/desktop space.